How I Built Compliance AI Agent Skills with ISO 27001 + EU AI Act

Building technology products today feels like navigating a legal minefield.

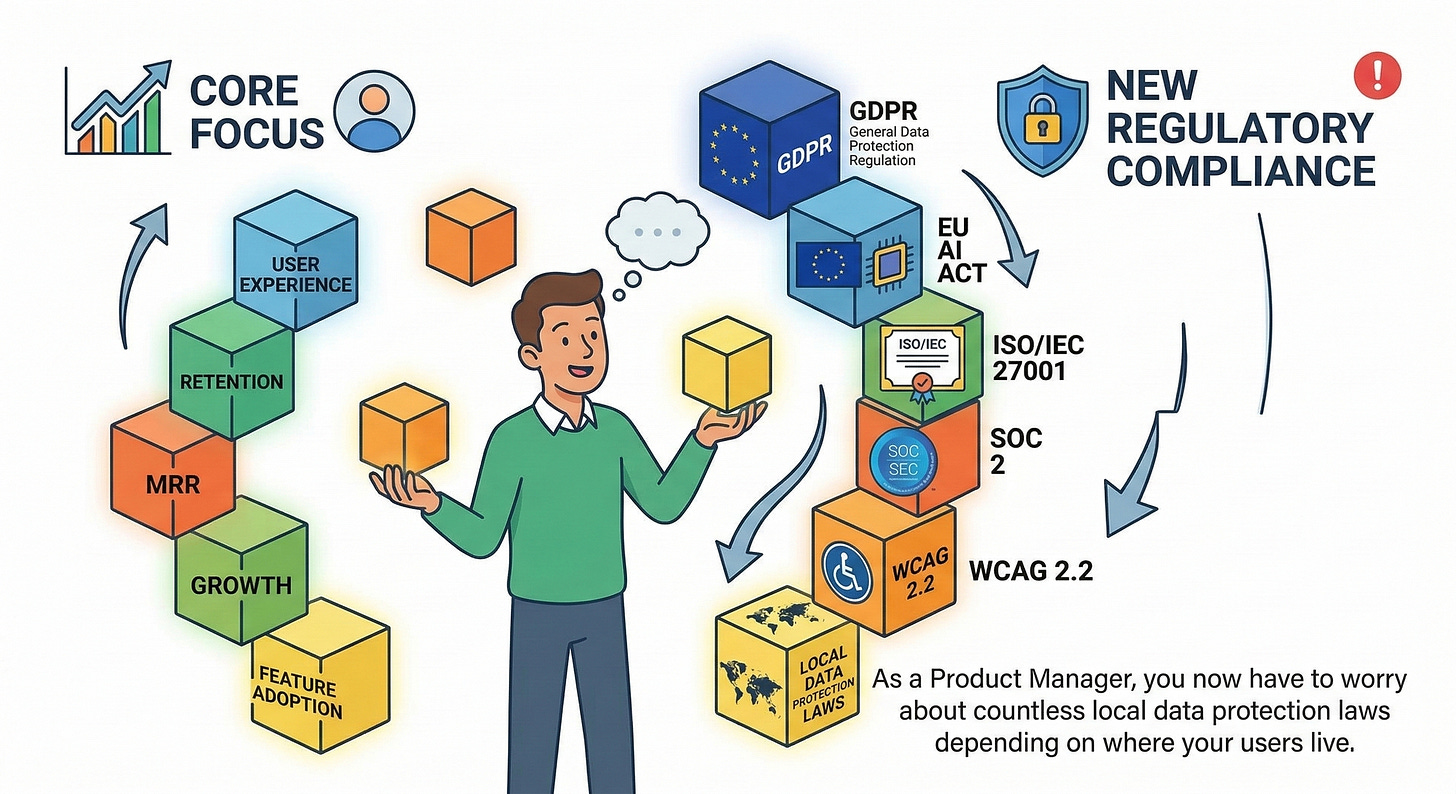

If you’re a Product Manager, you don’t just have to worry about user experience, retention, and MRR. You now have to worry about GDPR, the looming EU AI Act, ISO/IEC 27001, SOC 2, WCAG 2.2, and countless local data protection laws depending on where your users live.

The traditional workflow? Write your Product Requirement Document (PRD), send it to Legal/Compliance, wait 2 weeks, get a heavily redlined document back, rewrite the PRD, and delay your launch.

I was tired of this slow, reactive approach to compliance. So I built a solution: a set of Skills that turn an AI into a Senior Compliance Expert, one that injects regulatory guardrails directly into the PRD.

Here’s how I built the SafeAI-Global PRD Agent — and how it completely changed the way I write specs.

🛑 The Core Problem: Ignorance is Expensive

Most PMs (myself included, in the past) treat compliance as an afterthought. We build features first, then try to “add security later”.

But with the EU AI Act becoming enforceable (banning unacceptable-risk AI systems and heavily regulating high-risk systems) and fines reaching up to €35 million or 7% of global annual turnover, whichever is higher, “afterthought compliance” is no longer viable.

Furthermore, enterprise clients increasingly demand:

ISO/IEC 27001 (Information Security Management)

ISO/IEC 42001 (AI Management System)

SOC 2 Type II reports

WCAG 2.2 Level AA / ADA accessibility compliance (especially with the European Accessibility Act applying from June 2025).

Expecting a single PM to hold all of this legal nuance in their head is impossible. Expecting an AI to do it without specific, expert-level system instructions leads to generic, hallucinatory advice.

The Architecture: A Multi-Skill Markdown Suite

Instead of building a bulky SaaS platform or a dedicated web app, I chose to build Skills. In the era of autonomous agents and AI IDEs (like Cursor, Windsurf, or GitHub Copilot), the best way to distribute AI capabilities is through structured system instructions.

I built the engine using a “Multi-Skill” architecture available on skills.sh:

SafeAI-Global PRD Agent (The Core Router): A massive instruction set covering 35+ global jurisdictions. It acts as the brain, identifying the product’s target region and applying cross-border data transfer rules.

SafeAI GDPR Expert: Dives deep into article-by-article GDPR mapping and EU AI Act risk categorization.

SafeAI HIPAA Expert: Maps features to HIPAA technical safeguards and FDA Software as a Medical Device (SaMD) rules.

SafeAI FinTech Compliance: Enforces PCI-DSS v4.0, AML/KYC, and PSD2/SCA for financial products.

SafeAI ASEAN Data Protection: Focuses on Southeast Asian local laws (Vietnam’s PDPD, Singapore’s PDPA, etc.).

By splitting the logic into multiple skills, I ensured the agent doesn’t suffer from “context dilution.” It only loads the specific legal frameworks relevant to the product.
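To make the routing idea concrete, here is a minimal Python sketch. The skills themselves are plain Markdown, so this is not code from the SafeAI suite; it only illustrates the selection logic the core router describes in prose. The skill identifiers, the region and domain keys, and the `select_skills` function are all assumptions made for illustration.

```python
# Hypothetical skill identifiers that mirror the list above; they are not
# taken from the actual SKILL.md files.
CORE_SKILL = "safeai-global-prd-agent"

REGION_SKILLS = {
    "eu": ["safeai-gdpr-expert"],
    "asean": ["safeai-asean-data-protection"],
}
DOMAIN_SKILLS = {
    "health": ["safeai-hipaa-expert"],
    "fintech": ["safeai-fintech-compliance"],
}


def select_skills(regions: set[str], domains: set[str]) -> list[str]:
    """Return the core router plus only the specialist skills that apply."""
    selected = [CORE_SKILL]
    for region in sorted(regions):
        selected += REGION_SKILLS.get(region, [])
    for domain in sorted(domains):
        selected += DOMAIN_SKILLS.get(domain, [])
    # Drop duplicates while keeping a stable order.
    return list(dict.fromkeys(selected))


# An EU payments product loads the GDPR and FinTech skills, nothing else.
print(select_skills({"eu"}, {"fintech"}))
```

A product with no recognized region or domain gets only the core router, which is the point of the split: unrelated frameworks never enter the context window.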

Operationalizing ISO & SOC 2

The hardest part wasn’t telling the AI what the laws were; it was telling the AI how to apply them to product features.

In the system prompt, I mapped abstract frameworks to actionable PRD sections:

ISO/IEC 27001 (Annex A) maps to specific technical requirements (e.g., A.9 Access Control, in the 2013 edition’s numbering, translates to mandatory MFA features in the PRD).

ISO/IEC 42001 forces the AI to outline an “AI Impact Assessment” section if the product utilizes machine learning.

SOC 2 Trust Services Criteria ensure the agent always defines Availability SLAs and Processing Integrity checks.

The result is an output that legal and security teams actually respect. Instead of saying “ensure data is secure,” the PRD states: “Implement AES-256 encryption at rest (SOC 2 Confidentiality) and enforce role-based access control (ISO 27001 A.9.2.1).”
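The framework-to-requirement mapping can be pictured as a small lookup table. The sketch below is a hedged illustration in Python, not the contents of the actual system prompt: the trait names (`stores_user_data`, `user_accounts`, `uses_ml`), the `Requirement` type, and `render_requirements` are hypothetical, and the entries reuse only the examples given in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    framework: str  # e.g. "ISO 27001"
    reference: str  # control or criterion cited in the PRD
    prd_text: str   # concrete requirement written into the spec


# Illustrative entries only, based on the examples in this article.
FEATURE_REQUIREMENTS = {
    "stores_user_data": [
        Requirement("SOC 2", "Confidentiality", "Implement AES-256 encryption at rest"),
    ],
    "user_accounts": [
        Requirement("ISO 27001", "A.9", "Require MFA for all user accounts"),
        Requirement("ISO 27001", "A.9.2.1", "Enforce role-based access control"),
    ],
    "uses_ml": [
        Requirement("ISO/IEC 42001", "AI Impact Assessment", "Add an AI Impact Assessment section"),
    ],
}


def render_requirements(traits: list[str]) -> list[str]:
    """Turn product traits into PRD lines that cite their source control."""
    lines = []
    for trait in traits:
        for req in FEATURE_REQUIREMENTS.get(trait, []):
            lines.append(f"{req.prd_text} ({req.framework} {req.reference}).")
    return lines


for line in render_requirements(["stores_user_data", "user_accounts"]):
    print(line)
```

Keeping the citation next to each requirement is what lets a security reviewer trace a PRD line back to the control that motivated it.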

The “Aha!” Moment: Compliance Depth Selector

During testing, I hit a massive UX roadblock: Overkill.

If I asked the agent to write a PRD for a simple internal “Reset Password” feature, it would generate a 5-page compliance audit referencing the EU AI Act and Section 508 Accessibility. It was exhausting.

To fix this, I implemented Step 0: Choose Compliance Depth.

Now, when you interact with the agent, it asks you what level of rigor you need:

📝 Standard PRD: Fast, feature-focused, no compliance scanning. (Great for MVPs or internal tools).

🛡️ Smart Compliance (Default): The agent auto-detects your target region from the prompt and applies only the relevant laws.

🔒 Full Compliance Audit: It runs the full gamut—ISO, SOC 2, WCAG, and global frameworks. Maximum coverage for enterprise/regulated products.

This made the tool far more usable in daily workflows. It adapts to the context rather than forcing a heavy legal audit on every tiny sprint task.
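The three depth levels boil down to a simple branching rule. The following Python sketch is one way to express it, under stated assumptions: the `Depth` enum, the region codes, the framework tables, and `frameworks_for` are hypothetical names for illustration, since the real agent implements this step as Markdown instructions rather than code.

```python
from enum import Enum


class Depth(Enum):
    STANDARD = "standard"  # no compliance scanning
    SMART = "smart"        # only frameworks for the detected regions
    FULL = "full"          # every framework the agent knows


# Hypothetical tables; the real skill covers far more jurisdictions.
REGION_FRAMEWORKS = {
    "eu": ["GDPR", "EU AI Act"],
    "vn": ["Vietnam PDPD"],
    "sg": ["Singapore PDPA"],
}
ENTERPRISE_FRAMEWORKS = ["ISO/IEC 27001", "ISO/IEC 42001", "SOC 2", "WCAG 2.2"]


def frameworks_for(regions: list[str], depth: Depth = Depth.SMART) -> list[str]:
    """Pick the frameworks to apply for a given compliance depth."""
    if depth is Depth.STANDARD:
        return []
    if depth is Depth.SMART:
        selected = [fw for r in regions for fw in REGION_FRAMEWORKS.get(r, [])]
    else:
        # Full audit: enterprise frameworks plus every regional law.
        selected = ENTERPRISE_FRAMEWORKS + [
            fw for fws in REGION_FRAMEWORKS.values() for fw in fws
        ]
    return list(dict.fromkeys(selected))


print(frameworks_for(["eu"]))                  # Smart Compliance is the default
print(frameworks_for(["eu"], Depth.STANDARD))  # empty: no scanning at all
```

Making Smart Compliance the default argument mirrors the behavior described above: the heavy audit is opt-in, and the lightweight path is one explicit choice away.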

Security by Design: Zero Code, Zero Risk

A tool that promises security shouldn’t introduce supply-chain risks. That’s why the SafeAI suite is purely stateless Markdown.

No executable code.

No network connections or API calls.

No data collection.

It relies entirely on the host LLM (Claude, ChatGPT, or your IDE’s AI) to process the instructions, so your company’s proprietary PRDs go only to the AI provider you already use and are never sent to an additional third-party server.
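If you prefer to verify the "no executable code, no network" claims yourself before installing any skill file, a quick scan is easy to write. This is a rough sketch and not part of the SafeAI suite: `audit_skill_text`, the list of fence languages treated as executable, and the URL pattern are all assumptions, and a clean result is a first check, not a guarantee.

```python
import re

# Matches an opening or closing code fence and captures its language tag.
FENCE = re.compile(r"^[ \t]*(?:`{3,}|~{3,})[ \t]*([A-Za-z0-9_+-]*)", re.MULTILINE)
URL = re.compile(r"https?://[^\s)>\"']+")

# Assumed set of fence languages worth flagging for manual review.
EXECUTABLE_LANGS = {
    "sh", "bash", "shell", "zsh", "powershell",
    "python", "js", "javascript", "ts", "typescript",
}


def audit_skill_text(text: str) -> dict[str, list[str]]:
    """Report executable-looking code fences and URLs found in a skill file."""
    fence_langs = {m.group(1).lower() for m in FENCE.finditer(text)}
    return {
        "executable_fences": sorted(fence_langs & EXECUTABLE_LANGS),
        "urls": sorted(set(URL.findall(text))),
    }


sample = "# Skill\n\nApply GDPR rules to every PRD section.\n"
print(audit_skill_text(sample))
```

To scan a real file, read it first (for example with `pathlib.Path("SKILL.md").read_text(encoding="utf-8")`) and pass the text in; anything reported is a prompt to read that part of the file by hand.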

🚀 Try It Out

If you want to stop guessing about compliance and start building enterprise-ready products from day one, you can install the agent directly into your terminal or AI IDE.

If you have npx installed, simply run:

npx skills add datht-work/safeai-global-agent

Alternatively, you can just copy-paste the raw SKILL.md into Gemini Gems, ChatGPT GPTs, or Claude Projects.

Compliance used to be a blocker. With AI, it’s now a competitive advantage. Build fast, but build safe!

If you found this useful, feel free to star the SafeAI-Global repo on GitHub and contribute your local regulations!